Anthropic Just Killed Tool Calling - Here's What You Need to Know

Traditional JSON-based tool calling is becoming obsolete. With its Sonnet 4.6 release, Anthropic makes programmatic tool calling generally available, with reported token reductions of 30-80% alongside better agent accuracy. Learn how this fundamentally changes AI agent architecture and why it's likely to become the new industry standard.

The Tool Calling Problem

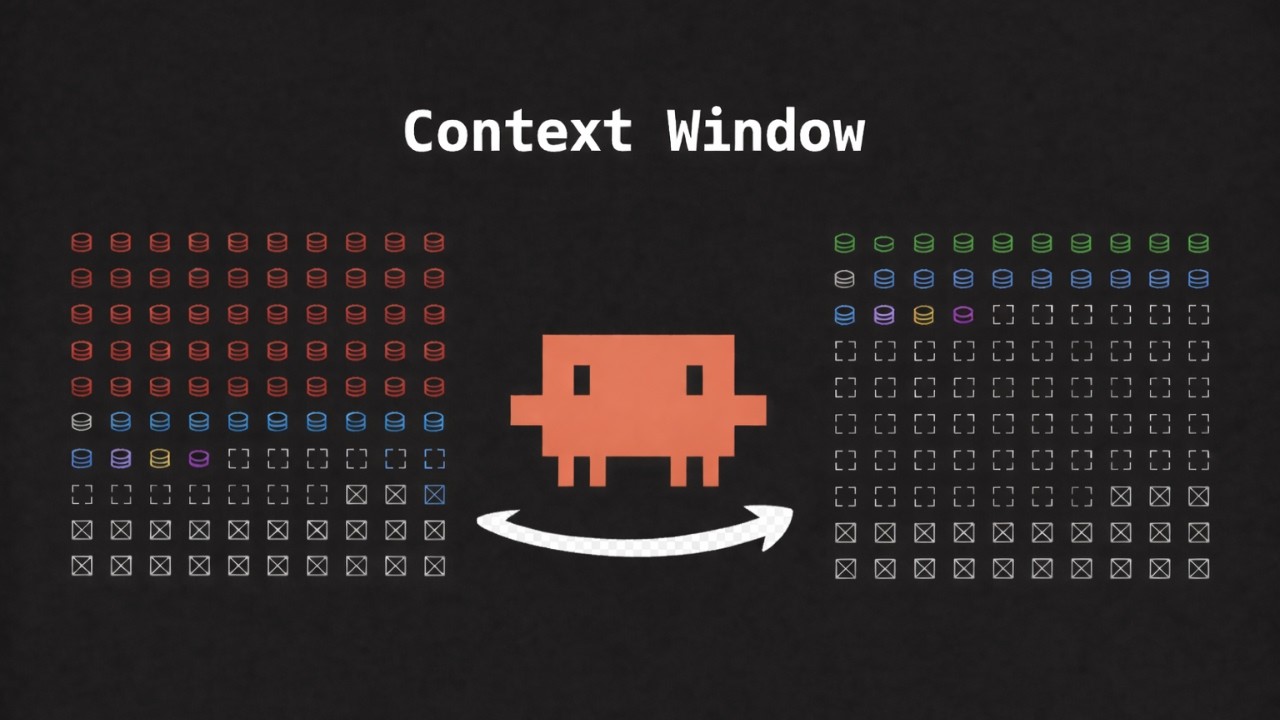

AI agents have been struggling with inefficient tool calling architectures that waste tokens and reduce accuracy. Traditional approaches load all tool definitions, intermediate results, and conversation history into the context window - polluting it with unnecessary data.

As shown at 2:15 in the video, this becomes especially problematic with protocols like MCP where multiple tools are available. Each tool call adds more data to the context window, creating a snowball effect that consumes valuable tokens with irrelevant information.

Context pollution problem: A typical agent might use 70-80% of its context window just for tool definitions and intermediate results, leaving little room for actual problem-solving. This forces developers to choose between expensive larger context windows and reduced functionality.
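To make the snowball effect concrete, here is a toy token-budget model of a traditional JSON tool-calling loop. Every constant in it (tool count, schema size, raw result size, a 200K-token window) is a hypothetical assumption chosen for illustration, not a measurement from the video or from Anthropic.

```python
# Toy model of context growth under traditional (JSON) tool calling.
# All constants are illustrative assumptions, not measured values.
CONTEXT_WINDOW = 200_000      # assumed context window size, in tokens
BASE_PROMPT_TOKENS = 1_000    # assumed system prompt + user request
NUM_TOOLS = 30                # assumed number of MCP tools exposed to the agent
TOOL_DEFINITION_TOKENS = 400  # assumed size of one tool's JSON schema
RESULT_TOKENS = 2_000         # assumed size of one raw tool result


def context_after_calls(num_calls: int) -> int:
    """Tokens in context after `num_calls` traditional tool calls.

    All tool definitions are loaded up front, and every call's raw
    result is appended to the conversation history and never removed.
    """
    definitions = NUM_TOOLS * TOOL_DEFINITION_TOKENS
    return BASE_PROMPT_TOKENS + definitions + num_calls * RESULT_TOKENS


if __name__ == "__main__":
    for calls in (0, 5, 20, 60):
        used = context_after_calls(calls)
        print(f"{calls:>3} calls: {used:>7} tokens ({used / CONTEXT_WINDOW:.1%} of window)")
```

Under these assumptions the agent spends 13,000 tokens (6.5% of the window) before making a single call, and after 60 calls it has used 133,000 tokens, roughly two thirds of the window, almost all of it on raw intermediate results.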

Programmatic Solution

Anthropic's programmatic tool calling solves this by letting agents write and execute code in a sandbox environment. Instead of JSON-based tool calling that loads everything into context, the agent:

- Identifies needed tools

- Writes code to invoke them in sequence

- Executes the code in a sandbox

- Only receives final processed results
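The four steps above can be sketched in plain Python. The two tools, their fake data, and the agent-written script are hypothetical; the point is that the script chains the tools inside the "sandbox" and only a small summary comes back. Note that `exec()` with a trimmed namespace is used here only to keep the sketch self-contained: it is not a real sandbox, and this is not Anthropic's implementation.

```python
import json


# Hypothetical tools standing in for real MCP or API calls.
def get_orders(customer_id: str) -> list:
    """Return 100 fake orders for one customer."""
    return [{"id": i, "customer": customer_id, "total": 10.0 * i} for i in range(1, 101)]


def get_refund_status(order_id: int) -> dict:
    """Fake refund lookup: every tenth order was refunded."""
    return {"order_id": order_id, "refunded": order_id % 10 == 0}


TOOLS = {"get_orders": get_orders, "get_refund_status": get_refund_status}

# Code the model might write: call the tools in sequence inside the
# sandbox and reduce everything to a small summary.
AGENT_CODE = """
orders = get_orders("c-42")
refunded = [o for o in orders if get_refund_status(o["id"])["refunded"]]
result = {
    "refunded_orders": len(refunded),
    "refunded_total": sum(o["total"] for o in refunded),
}
"""


def run_in_sandbox(code: str, tools: dict) -> dict:
    """Run agent-written code with only the given tools and a few builtins.

    WARNING: exec() with a trimmed namespace is NOT a security boundary.
    A production system would run this in an isolated container or VM.
    """
    namespace = {"__builtins__": {"len": len, "sum": sum}, **tools}
    exec(code, namespace)
    return namespace["result"]


if __name__ == "__main__":
    # Traditional calling: every intermediate result would enter the context.
    orders = get_orders("c-42")
    raw = json.dumps(orders) + json.dumps([get_refund_status(o["id"]) for o in orders])
    # Programmatic calling: only the final summary enters the context.
    summary = json.dumps(run_in_sandbox(AGENT_CODE, TOOLS))
    print(f"intermediate data: {len(raw)} chars; returned to model: {len(summary)} chars")
    print(summary)
```

With JSON tool calling, all 100 orders and 100 refund lookups would pass through the model's context; here the model only sees the final summary, `{"refunded_orders": 10, "refunded_total": 5500.0}`.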

This approach leverages LLMs' natural coding ability while avoiding their weakness with synthetic JSON formats. As the transcript explains at 4:30, "LLMs are trained on billions of lines of code... they can produce and understand code but barely any synthetic JSON tool calling formats."

Token savings: Cloudflare reported 30-80% reductions in token usage, while Anthropic saw 24% fewer input tokens alongside 11% accuracy improvements in benchmarks.

Industry Adoption Timeline

Programmatic tool calling didn't emerge overnight. The transcript reveals a clear progression:

- September 2025: Cloudflare publishes "Code Mode: The Better Way to Use MCP"

- November 2025: Anthropic publishes "Code Execution with MCP" on its engineering blog

- Late November 2025: Anthropic adds advanced tool use, including tool search

- December 2025: Open-source implementations emerge (Block's Goose, LiteLLM)

- February 2026: Anthropic makes programmatic tool calling GA with Sonnet 4.6

This mirrors Anthropic's previous innovations like MCP and agent skills that became industry standards. At 6:45, the video notes: "Just like anything released by Anthropic, the usage kind of exploded within the open source community."

Benchmark Results

Anthropic tested programmatic tool calling on two benchmarks:

BrowseComp: Tests an agent's ability to find hard-to-locate information by browsing the web. Sonnet 4.6 improved from 33% to 46% accuracy (a 13-point gain) while using fewer tokens.

The second benchmark, Deep Search QA (finding multiple correct answers), saw F1 scores improve from 52% to 59% for Sonnet 4.6. Both benchmarks demonstrated that programmatic calling doesn't just save tokens - it actually improves agent capabilities.
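Because Deep Search QA questions have several correct answers, results are reported as F1 rather than plain accuracy. The sketch below shows standard set-based F1; we have not verified the benchmark's exact scoring rules, and the normalization step and example answers are assumptions for illustration.

```python
def normalize(answer: str) -> str:
    """Light normalization: lowercase and collapse whitespace (an assumption;
    real graders may normalize differently)."""
    return " ".join(answer.lower().split())


def set_f1(predicted: list, gold: list) -> float:
    """Set-based F1 between a model's answer list and the gold answer list."""
    pred = {normalize(a) for a in predicted}
    ref = {normalize(a) for a in gold}
    hits = len(pred & ref)
    if hits == 0:
        return 0.0
    precision = hits / len(pred)  # share of returned answers that are correct
    recall = hits / len(ref)      # share of correct answers that were found
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    gold = ["Paris", "Lyon", "Marseille", "Toulouse"]
    predicted = ["paris", "Lyon", "Nice"]
    print(round(set_f1(predicted, gold), 3))  # precision 2/3, recall 1/2 -> 0.571
```

Returning an extra wrong answer lowers precision, while missing a correct one lowers recall, so F1 rewards agents that find all the answers without padding the list.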

However, as noted at 9:20 in the video: "Token cost will vary depending on how much code the model needs to write... Opus 4.6 saw increased price-weighted tokens because it wrote more filtering code." This highlights the importance of balancing code complexity against token savings.
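The trade-off can be checked with simple arithmetic. This sketch prices three hypothetical runs; the token counts and the per-million-token prices (output priced higher than input, as is common for hosted LLM APIs) are assumptions, not Anthropic's published figures.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    input_tokens: int
    output_tokens: int


# Hypothetical per-million-token prices (assumptions for illustration).
INPUT_PRICE = 3.00
OUTPUT_PRICE = 15.00


def price_weighted_cost(u: Usage) -> float:
    """Dollar cost of one run at the assumed prices."""
    return (u.input_tokens * INPUT_PRICE + u.output_tokens * OUTPUT_PRICE) / 1_000_000


runs = {
    # Raw results land in context; the model writes little.
    "json_tool_calling": Usage(input_tokens=100_000, output_tokens=2_000),
    # A short filter removes most irrelevant data.
    "simple_filter": Usage(input_tokens=60_000, output_tokens=3_000),
    # Elaborate filtering code: fewer input tokens, far more output tokens.
    "heavy_filter": Usage(input_tokens=70_000, output_tokens=20_000),
}

if __name__ == "__main__":
    for name, usage in runs.items():
        print(f"{name:<18} ${price_weighted_cost(usage):.3f}")
```

With these assumed numbers, the simple filter costs about $0.23 versus $0.33 for JSON tool calling, while the heavy filter costs about $0.51 despite sending 30% fewer input tokens, because the extra code is billed as more expensive output.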

Implementation Details

For web search (one of the first generally available implementations), Anthropic automatically applies dynamic filtering when:

- Using their search API

- Data fetching is enabled

- The agent writes filtering code

The transcript explains at 10:30: "Claude writes code to do post-processing on query results... only putting relevant results in the context window." This happens before injection, preventing pollution.
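The post-processing step can be pictured as a small filter the model writes against raw search results before anything is injected into context. The result fields (`url`, `text`), the keyword rule, and the limits below are hypothetical; Anthropic's actual payloads and the code Claude writes will differ.

```python
def filter_results(results: list, keywords: list, max_results: int = 3, max_chars: int = 200) -> list:
    """Keep results whose text mentions every keyword, trimmed to a short snippet.

    Only the returned list would be placed in the model's context; the raw
    results stay in the sandbox.
    """
    wanted = [k.lower() for k in keywords]
    kept = []
    for r in results:
        text = r["text"].lower()
        if all(k in text for k in wanted):
            kept.append({"url": r["url"], "snippet": r["text"][:max_chars]})
            if len(kept) == max_results:
                break
    return kept


if __name__ == "__main__":
    # Hypothetical raw results from a web search tool.
    raw_results = [
        {"url": "https://example.com/a", "text": "Release notes: programmatic tool calling is now GA."},
        {"url": "https://example.com/b", "text": "Unrelated page about cooking pasta."},
        {"url": "https://example.com/c", "text": "Benchmark: programmatic tool calling cut input tokens."},
        {"url": "https://example.com/d", "text": "A page that mentions tool calling only in passing."},
    ]
    for hit in filter_results(raw_results, ["programmatic", "tool calling"]):
        print(hit["url"])
```

Only pages `a` and `c` survive; the other two never reach the context window.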

For custom tools, developers provide:

- Tool name and description

- Input/output schemas

- Code execution capability
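Here is what a custom tool definition might look like, using the `name` / `description` / `input_schema` shape of Anthropic's Messages API tool definitions. The `get_weather` tool is hypothetical, and its output shape is documented in the description (an assumption; check whether the current API offers a dedicated output field). The `code_execution_20250825` tool type and the `allowed_callers` field follow our reading of Anthropic's programmatic tool calling documentation; treat both as assumptions and verify them against the current docs. No API call is made here.

```python
# Hypothetical tool definitions in the shape of Anthropic Messages API
# `tools` entries (name / description / input_schema as JSON Schema).
CODE_EXECUTION_TOOL = {
    # Assumption: sandbox tool type as named in Anthropic's docs; verify.
    "type": "code_execution_20250825",
    "name": "code_execution",
}

GET_WEATHER_TOOL = {
    "name": "get_weather",  # hypothetical custom tool
    "description": (
        "Return the current temperature for a city. "
        'Output: JSON object {"city": string, "temp_c": number}.'
    ),
    "input_schema": {
        "type": "object",
        "properties": {"city": {"type": "string", "description": "City name"}},
        "required": ["city"],
    },
    # Assumption: lets sandboxed code call this tool instead of only
    # direct JSON tool calls; verify the field against current docs.
    "allowed_callers": ["code_execution_20250825"],
}

TOOLS = [CODE_EXECUTION_TOOL, GET_WEATHER_TOOL]


def check_required_args(tool: dict, args: dict) -> None:
    """Minimal local check that required inputs are present.

    This is not full JSON Schema validation; use a schema validator for that.
    """
    required = tool["input_schema"].get("required", [])
    missing = [name for name in required if name not in args]
    if missing:
        raise ValueError(f"{tool['name']}: missing required inputs {missing}")


if __name__ == "__main__":
    check_required_args(GET_WEATHER_TOOL, {"city": "Paris"})  # passes silently
    try:
        check_required_args(GET_WEATHER_TOOL, {})
    except ValueError as err:
        print(err)
```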

Anthropic provides detailed documentation and cookbooks, making adoption easier. As with previous innovations, this will likely see rapid ecosystem adoption.

Watch the Full Tutorial

For a deeper dive into programmatic tool calling, including specific examples of dynamic filtering in action (demonstrated at 7:15), watch the full video tutorial.

Key Takeaways

Programmatic tool calling represents a fundamental shift in how AI agents interact with tools. By leveraging LLMs' natural coding ability instead of forcing JSON-based calling, developers can achieve both cost savings and accuracy improvements.

In summary: Programmatic tool calling can cut token costs substantially (30-80% in Cloudflare's reports, 24% fewer input tokens in Anthropic's), improved benchmark accuracy by up to 13 percentage points, and will likely become an industry standard - just as Anthropic's previous innovations have. Early adopters can gain significant competitive advantage.

Frequently Asked Questions

Common questions about programmatic tool calling

What is programmatic tool calling?

Programmatic tool calling is a new approach where AI agents write and execute code to invoke specific tools in a sandbox environment, rather than using traditional JSON-based tool calling.

This method can cut token usage substantially (Cloudflare reported 30-80%) while improving accuracy, since LLMs are better at writing code than at producing synthetic JSON tool-calling formats.

- Eliminates context window pollution

- Leverages LLMs' natural coding ability

- Only returns final processed results

How does it differ from traditional tool calling?

Traditional tool calling loads all tool definitions and intermediate results into the context window. Programmatic calling keeps intermediate processing within a sandbox, only returning final results to the LLM.

Anthropic saw 24% fewer input tokens and 11% accuracy improvements in benchmarks. Cloudflare reported even greater savings of 30-80% in some implementations.

- No tool definition overhead

- Intermediate results stay in sandbox

- Only relevant data enters context

Who has implemented programmatic tool calling?

Anthropic and Cloudflare have both implemented programmatic tool calling. Cloudflare reported 30-80% token savings, while Anthropic's Sonnet 4.6 gained 13 percentage points on the BrowseComp web-browsing benchmark.

OpenAI's GPT 5.2 also added support for 20+ tools using this method, and Google's Gemini has offered similar capabilities since Gemini 2.0.

- Anthropic leads with its Sonnet 4.6 release

- Cloudflare showed early success

- Open-source projects rapidly adopting

Does programmatic tool calling always reduce costs?

Not always. Anthropic found that while Sonnet 4.6 reduced price-weighted tokens, Opus 4.6 saw increased costs because it wrote more filtering code.

The tradeoff depends on how much code the model writes versus how much irrelevant data it filters out. Complex filtering code may increase costs even as it reduces the tokens that reach the context window.

- Code writing has its own cost

- Simple filters save most tokens

- Balance complexity against savings

What do the benchmark results show?

BrowseComp (finding hard-to-locate information by browsing the web) showed Sonnet 4.6 improving from 33% to 46% accuracy. Deep Search QA (finding multiple correct answers) improved F1 scores from 52% to 59%.

Both benchmarks saw significant token reductions alongside accuracy gains. BrowseComp's 13-point accuracy jump is particularly notable, comparable to the kind of gain usually associated with a major model upgrade.

- BrowseComp: +13 points accuracy

- Deep Search QA: +7 points F1

- 24% fewer input tokens

How do I get started?

For web search, simply enable data fetching in Anthropic's search API - the model will automatically use dynamic filtering.

For custom tools, provide tool definitions with code execution capabilities in a sandbox environment. Anthropic provides detailed documentation and cookbooks for implementation.

- Web search: Enable data fetching

- Custom tools: Define with code execution

- Use provided sandbox environment

Will programmatic tool calling become an industry standard?

Given Anthropic's track record with MCP and agent skills (which became industry standards), and rapid adoption by Cloudflare and open-source projects like LiteLLM, programmatic tool calling will likely see wide adoption.

OpenAI and Google have already implemented similar capabilities, suggesting this approach will become the norm for efficient agent architectures.

- Follows MCP adoption pattern

- Multiple major players implementing

- Solves fundamental efficiency problem

How can GrowwStacks help?

GrowwStacks helps businesses implement programmatic tool calling and other AI agent optimizations. We design custom solutions that reduce token costs while improving accuracy, integrating with your existing tools.

Our team can implement Anthropic's latest features or build hybrid solutions combining multiple providers. We'll analyze your specific workflows to maximize efficiency gains from these new approaches.

- Custom programmatic calling implementations

- Token cost reduction analysis

- Free 30-minute consultation
