Scrape Any Website & Automate with Browse.ai

We build AI-powered web scraping pipelines using Browse.ai, Python scripts, and RSS feeds — extracting full article content, product data, and competitor information and piping it directly into Make.com, n8n, ChatGPT, and your publishing or CRM platforms automatically.

3 Ways We Scrape & Automate the Web

We choose the right scraping method based on your target website, the data you need, and where that data needs to go — building end-to-end pipelines that run automatically.

Browse.ai — AI-Powered Scraping

Browse.ai is an AI-powered no-code scraper that can extract clean, structured content from virtually any website, including dynamic JavaScript-rendered pages that RSS feeds or basic HTTP scrapers cannot handle. We create Browse.ai robots tailored to your target URL, configure the data extraction schema, and connect the output directly to Make.com for downstream automation. The result is clean text with no HTML noise, delivered automatically.

Python Custom Scraping Scripts

For websites that require custom logic — login sessions, paginated results, anti-bot bypass, or deeply nested data structures — we write Python scraping scripts using BeautifulSoup, Scrapy, Playwright, or Selenium. These scripts run on a schedule or on demand, extracting exactly the data you need and pushing it into Google Sheets, Airtable, a database, or any downstream automation platform.
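As a minimal sketch of the paginated-results case, the loop below keeps requesting pages until one comes back empty. The `fetch_page` callable is a hypothetical stand-in for whatever actually retrieves a page (an HTTP request, a Playwright session, and so on), and `FAKE_SITE` is made-up data used only to show the loop running.

```python
from typing import Callable, Iterator, Optional


def scrape_all_pages(
    fetch_page: Callable[[int], Optional[list[dict]]],
    max_pages: int = 100,
) -> Iterator[dict]:
    """Yield records page by page until a page comes back empty."""
    for page_number in range(1, max_pages + 1):
        records = fetch_page(page_number)
        if not records:  # None or [] means we ran past the last page
            break
        yield from records


# Hypothetical stand-in for a real site: page number -> records on that page.
FAKE_SITE = {1: [{"id": 1}, {"id": 2}], 2: [{"id": 3}]}


def fake_fetch(page_number: int) -> Optional[list[dict]]:
    return FAKE_SITE.get(page_number)


rows = list(scrape_all_pages(fake_fetch))
print(rows)
```

The `max_pages` cap is a safety limit so that a site which never returns an empty page cannot keep the script running indefinitely.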

RSS Feed, Make.com & n8n

RSS feeds provide titles and summaries but rarely the full article content. We combine RSS feed monitoring in Make.com with Browse.ai or Python to fetch the complete article, extracting full body text, images, and metadata before passing it to ChatGPT for reformatting or summarisation. For teams that need self-hosted, privacy-first pipelines with no per-operation costs, n8n orchestrates the same RSS-to-scrape-to-publish flow on your own infrastructure with unlimited executions.
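To illustrate the first step of that flow, here is a rough Python sketch that reads an RSS 2.0 feed and collects the article links a scraper would then visit. It uses only the standard library; `SAMPLE_FEED` and its example.com URLs are invented for the illustration, and a real feed may use Atom or namespaced elements that this sketch does not handle.

```python
import xml.etree.ElementTree as ET

# Invented sample feed; a real pipeline would download this from the feed URL.
SAMPLE_FEED = """<rss version="2.0">
  <channel>
    <title>Example Feed</title>
    <item>
      <title>First post</title>
      <link>https://example.com/first</link>
      <description>Short summary only.</description>
    </item>
    <item>
      <title>Second post</title>
      <link>https://example.com/second</link>
      <description>Another short summary.</description>
    </item>
  </channel>
</rss>"""


def feed_items(rss_xml: str) -> list[dict]:
    """Return title, link and summary for every <item> in an RSS 2.0 feed."""
    root = ET.fromstring(rss_xml)
    items = []
    for item in root.iter("item"):
        items.append({
            "title": (item.findtext("title") or "").strip(),
            "link": (item.findtext("link") or "").strip(),
            "summary": (item.findtext("description") or "").strip(),
        })
    return items


items = feed_items(SAMPLE_FEED)
for entry in items:
    print(entry["link"])  # each link is a URL to scrape for the full article
```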

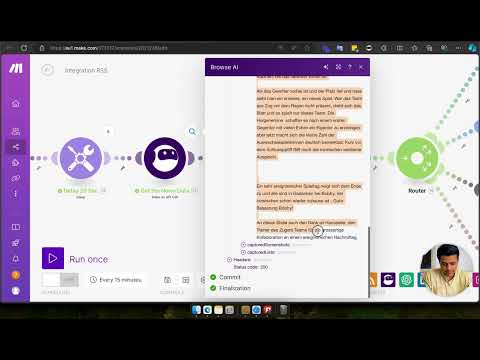

Watch Our Browse.ai Scraping Demo

A full walkthrough of a real Browse.ai automation — extracting complete article content beyond RSS limitations, passing it through ChatGPT, generating images, and publishing across all social media platforms automatically.

What We Build With Browse.ai

Real web scraping and content automation pipelines we have delivered for media companies, marketing agencies, e-commerce brands, and research teams.

Full Article Scraping Beyond RSS Limits

Many RSS feeds return only titles and short summaries. We use Browse.ai robots to scrape the full article from the source URL, extracting clean body text without HTML tags, ads, or navigation elements. The complete content is then passed to ChatGPT for reformatting and published automatically to WordPress or social media platforms.
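For a sense of what "clean body text" means in practice, the sketch below strips tags and skips common non-article containers using Python's standard `html.parser`. This is an illustrative heuristic, not how Browse.ai works internally; the `SKIP_TAGS` set is an assumption, and real ad blocks usually need site-specific rules.

```python
from html.parser import HTMLParser

# Assumed set of containers that rarely hold article text.
SKIP_TAGS = {"script", "style", "nav", "header", "footer", "aside"}


class ArticleTextExtractor(HTMLParser):
    """Collect visible text, ignoring anything inside SKIP_TAGS."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self._chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self._chunks.append(text)

    def text(self) -> str:
        return "\n".join(self._chunks)


def extract_article_text(html: str) -> str:
    parser = ArticleTextExtractor()
    parser.feed(html)
    parser.close()
    return parser.text()


sample = (
    "<html><body><nav>Home | About</nav>"
    "<article><h1>Title</h1><p>Body text.</p></article>"
    "<script>track()</script><footer>Copyright</footer></body></html>"
)
print(extract_article_text(sample))
```

Each text fragment ends up on its own line, so inline markup such as bold text inside a paragraph will split that paragraph; a production extractor would join fragments per block element.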

Scrape → ChatGPT → Social Media Pipeline

We build end-to-end content pipelines where Browse.ai scrapes the full article, Make.com passes the content to ChatGPT to generate a rewritten or summarised version, DALL-E or Ideogram generates a matching image, and the final post is published automatically across all connected social media platforms — all triggered from a single URL or RSS event.

Competitor & Product Price Monitoring

We build Browse.ai robots that monitor competitor websites or marketplaces on a schedule, extracting product names, prices, stock availability, and ratings. All scraped data is stored in Google Sheets or Airtable, and alerts are sent via Slack or email whenever a price changes by more than a defined threshold, so your team's competitive intelligence is as current as the latest scheduled run.
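The threshold check itself is simple arithmetic. As a hedged sketch, assuming the previous and current runs are available as plain product-to-price dictionaries (the product names, prices, and the 5% default below are all made up), it could look like this:

```python
def price_alerts(
    previous: dict[str, float],
    current: dict[str, float],
    threshold_pct: float = 5.0,
) -> list[dict]:
    """Return one alert per product whose price moved more than threshold_pct."""
    alerts = []
    for product, new_price in current.items():
        old_price = previous.get(product)
        if old_price is None or old_price == 0:
            continue  # new product or no usable baseline to compare against
        change_pct = (new_price - old_price) / old_price * 100
        if abs(change_pct) > threshold_pct:
            alerts.append({
                "product": product,
                "old": old_price,
                "new": new_price,
                "change_pct": round(change_pct, 2),
            })
    return alerts


# Made-up data standing in for two consecutive scheduled runs.
last_run = {"Widget": 100.0, "Gadget": 50.0}
this_run = {"Widget": 90.0, "Gadget": 51.0, "Gizmo": 10.0}

alerts = price_alerts(last_run, this_run)
print(alerts)
```

In a full pipeline the returned list would be what gets forwarded to Slack or email; products seen for the first time are skipped here because there is no earlier price to compare with.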

Structured Data Extraction to Google Sheets

We configure Browse.ai to extract structured data — listings, directories, profiles, job postings, or any repeating data pattern — from target websites and push the results directly into Google Sheets or Airtable in clean, column-organised format. Scheduled runs ensure the data stays fresh without anyone having to manually visit the website and copy data.
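"Column-organised" here means every record lands in the same fixed set of columns, even when a field is missing. A minimal sketch using Python's standard `csv` module, with hypothetical job-posting records and column names, shows the shape of the data before it is loaded into a spreadsheet:

```python
import csv
import io


def records_to_csv(records: list[dict], columns: list[str]) -> str:
    """Render scraped records as CSV with a fixed column order."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=columns,
        extrasaction="ignore",  # drop fields that are not in the column list
        restval="",             # leave missing fields blank
        lineterminator="\n",
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Hypothetical scraped records; the second one is missing a field.
scraped = [
    {"title": "Engineer", "company": "Acme", "internal_id": "x1"},
    {"title": "Analyst"},
]

csv_text = records_to_csv(scraped, ["title", "company"])
print(csv_text)
```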

News & Trend Monitoring Automation

We build news monitoring pipelines that watch industry websites, news aggregators, and RSS feeds for new content matching specific keywords or topics. When a matching article is found, Browse.ai extracts the full content, ChatGPT summarises it, and a briefing is automatically delivered to Slack, email, or a shared Google Sheet — keeping your team informed without manual research.
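The keyword-matching step can be sketched in a few lines of Python. This version assumes simple case-insensitive, whole-word matching; the headline and keyword list are invented, and a real pipeline might add stemming or topic classification on top.

```python
import re


def matching_keywords(text: str, keywords: list[str]) -> list[str]:
    """Return the keywords that appear in text as whole words or phrases."""
    found = []
    for keyword in keywords:
        pattern = r"\b" + re.escape(keyword) + r"\b"
        if re.search(pattern, text, flags=re.IGNORECASE):
            found.append(keyword)
    return found


# Invented headline and watch list.
headline = "OpenAI ships a new model for web scraping"
watch_list = ["web scraping", "ship", "openai"]

hits = matching_keywords(headline, watch_list)
print(hits)
```

Whole-word matching means "ship" does not match "ships", which avoids noisy partial hits at the cost of missing plurals unless they are listed explicitly.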

Custom Python Scraping for Complex Sites

For websites requiring authentication, multi-step navigation, or JavaScript rendering beyond what Browse.ai handles, we write custom Python scraping scripts using Playwright or Selenium. These scripts handle login flows, paginated results, infinite scroll, and dynamic content — extracting exactly the data you need on a reliable schedule and pushing it to any destination.

Browse.ai Integrations We Build

From content management systems and AI platforms to databases and communication tools — we connect Browse.ai scraped data with every platform your business needs.

Ready to Automate Your Web Scraping?

Tell us what data you need, which websites you want to monitor, and where that data should go — and we'll build a custom scraping pipeline that runs automatically on your schedule. Free consultation, no commitment.