What This Workflow Does

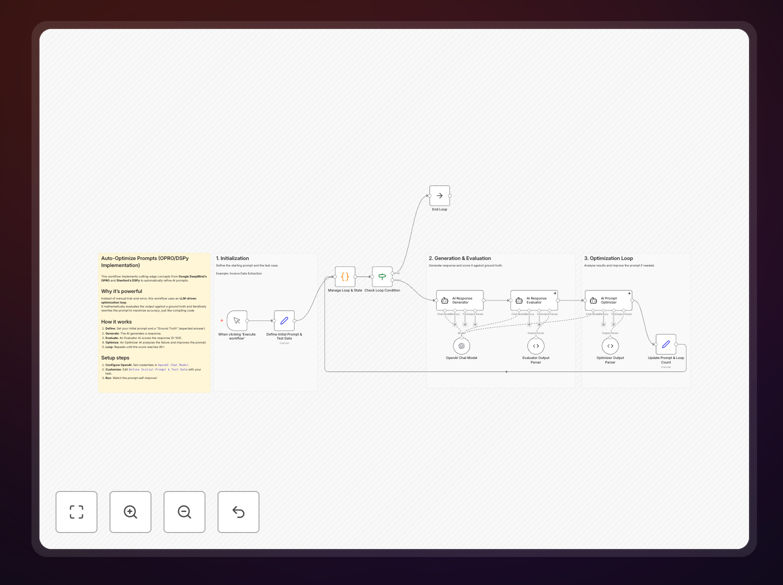

This automation addresses inconsistent AI outputs by combining two prompt-optimization approaches. Google DeepMind's OPRO (Optimization by PROmpting) uses a language model as the optimizer: the model is shown earlier prompts together with their scores and asked to propose better ones, round after round. Stanford's DSPy framework adds structure by treating prompts as parts of a program that are tuned against an explicit metric. Together they offer a systematic alternative to manual trial-and-error prompt engineering.

The workflow repeatedly scores prompts against your success metrics and automatically generates improved versions. This replaces hours of hand-testing different phrasings, with the goal of better results. Businesses using AI for content generation, data analysis, or customer interactions can get more accurate, consistent outputs with less effort.

How It Works

1. Performance Baseline Establishment

The workflow first scores your current prompts on metrics such as accuracy, relevance, and token efficiency. These scores form the quantitative baseline that later versions must improve on.
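As a rough illustration of the baseline step, the Python sketch below scores one prompt template over a set of test cases. The metric definitions are assumptions for illustration, not the workflow's actual formulas: accuracy is case-insensitive exact match, relevance is the share of expected words that appear in the output, and token efficiency uses a word count as a stand-in for real model tokens. `fake_model` is a hypothetical stub standing in for an OpenAI call so the example runs offline.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    input: str      # value substituted for {input} in the prompt template
    expected: str   # reference answer for this case


def words(text: str) -> list[str]:
    # Crude word tokenizer; a real setup would count tokens with the model's tokenizer.
    return re.findall(r"\w+", text.lower())


def evaluate_prompt(template: str, cases: list[EvalCase],
                    model: Callable[[str], str], max_tokens: int = 50) -> dict[str, float]:
    """Average accuracy, relevance and token efficiency over all cases (illustrative metrics)."""
    accuracy = relevance = efficiency = 0.0
    for case in cases:
        output = model(template.format(input=case.input))
        out_words = words(output)
        expected_words = set(words(case.expected))
        # Accuracy: 1 if the output matches the reference exactly (ignoring case and outer whitespace).
        accuracy += float(output.strip().lower() == case.expected.strip().lower())
        # Relevance: fraction of reference words that show up in the output.
        if expected_words:
            relevance += len(expected_words & set(out_words)) / len(expected_words)
        # Token efficiency: shorter outputs score closer to 1, clamped at 0.
        efficiency += max(0.0, 1.0 - len(out_words) / max_tokens)
    n = len(cases)
    return {"accuracy": accuracy / n, "relevance": relevance / n, "token_efficiency": efficiency / n}


def fake_model(prompt: str) -> str:
    # Hypothetical stub in place of a real OpenAI API call.
    return "Paris" if "France" in prompt else "unknown"


cases = [EvalCase("What is the capital of France?", "Paris"),
         EvalCase("What is the capital of Japan?", "Tokyo")]
baseline = evaluate_prompt("Answer in one word: {input}", cases, fake_model)
print(baseline)
```

Storing this dictionary gives the reference point that each later prompt version has to beat on the same test cases.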

2. Iterative Optimization Cycles

Following the OPRO method, the system generates multiple prompt variations and scores each against your success criteria. The top-performing versions, together with their scores, feed into the next refinement cycle.
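The optimization cycle can be sketched in Python along the general lines of the OPRO paper: previously scored prompts are written into a meta-prompt in ascending score order (best last), an optimizer model proposes new candidates, and each candidate is scored and added to the history. `optimizer_llm` and `score_fn` are hypothetical callables; in the workflow they would presumably wrap an OpenAI call and an evaluation like the baseline step. The meta-prompt wording, step counts, and stub functions are illustrative assumptions.

```python
from typing import Callable


def build_meta_prompt(history: list[tuple[str, float]], top_k: int = 20) -> str:
    # Keep the top_k prompts, sorted ascending so the highest-scoring ones come last.
    best = sorted(history, key=lambda pair: pair[1])[-top_k:]
    parts = ["Below are previous instructions with their scores (higher is better)."]
    parts += [f"text: {text}\nscore: {score:.2f}" for text, score in best]
    parts.append("Write a new instruction that differs from the ones above and achieves a higher score.")
    return "\n\n".join(parts)


def opro_optimize(seed_prompts: list[str],
                  score_fn: Callable[[str], float],
                  optimizer_llm: Callable[[str], str],
                  steps: int = 5,
                  candidates_per_step: int = 4) -> tuple[str, float]:
    history = [(p, score_fn(p)) for p in seed_prompts]
    for _ in range(steps):
        # One meta-prompt per step; several candidates are sampled from it.
        meta_prompt = build_meta_prompt(history)
        seen = {text for text, _ in history}
        for _ in range(candidates_per_step):
            candidate = optimizer_llm(meta_prompt).strip()
            # Skip empty or duplicate proposals so the history stays informative.
            if candidate and candidate not in seen:
                history.append((candidate, score_fn(candidate)))
                seen.add(candidate)
    # Return the best (prompt, score) pair found so far.
    return max(history, key=lambda pair: pair[1])


# Hypothetical stubs so the sketch runs without an API key.
_proposals = iter(["Answer the question.",
                   "Answer the question concisely.",
                   "Think step by step, then answer concisely."])


def fake_optimizer(meta_prompt: str) -> str:
    return next(_proposals, "")


def fake_score(prompt: str) -> float:
    return 0.1 + 0.3 * ("concise" in prompt) + 0.5 * ("step by step" in prompt)


best_prompt, best_score = opro_optimize(["Answer."], fake_score, fake_optimizer,
                                        steps=2, candidates_per_step=2)
print(best_prompt, best_score)
```

With a real optimizer model, sampling at a non-zero temperature is what makes the candidates within one step differ; the deterministic stub only mimics that.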

3. Structured DSPy Framework

Stanford's DSPy approach breaks the pipeline into modules with declared inputs and outputs and optimizes them against a defined metric, which makes results easier to reproduce across use cases and AI models.
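DSPy itself is a Python library, and the snippet below does not use its API. It is a dependency-free sketch of two ideas this step relies on: a signature that declares a module's inputs and outputs, and an optimizer that bootstraps few-shot demonstrations by keeping training examples the current program already answers correctly (loosely modeled on DSPy's BootstrapFewShot optimizer). All class and function names here, and the stub `fake_lm`, are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

Example = dict[str, str]


@dataclass
class Signature:
    instructions: str
    inputs: list[str]
    outputs: list[str]


@dataclass
class PredictModule:
    signature: Signature
    lm: Callable[[str], str]
    demos: list[Example] = field(default_factory=list)

    def render(self, example: Example) -> str:
        # Prompt = instructions, then each demo as "field: value" lines, then the new inputs.
        sig = self.signature
        parts = [sig.instructions]
        for demo in self.demos:
            parts.append("\n".join(f"{k}: {demo[k]}" for k in sig.inputs + sig.outputs))
        parts.append("\n".join(f"{k}: {example[k]}" for k in sig.inputs))
        return "\n\n".join(parts)

    def __call__(self, example: Example) -> Example:
        # This sketch assumes a single output field.
        return {self.signature.outputs[0]: self.lm(self.render(example)).strip()}


def bootstrap_demos(module: PredictModule, trainset: list[Example],
                    metric: Callable[[Example, Example], bool],
                    max_demos: int = 2) -> PredictModule:
    """Return a copy of `module` whose demos are training examples it already gets right."""
    demos: list[Example] = []
    for example in trainset:
        prediction = module(example)
        if metric(example, prediction):
            demos.append({**example, **prediction})
        if len(demos) >= max_demos:
            break
    return PredictModule(module.signature, module.lm, demos)


# Hypothetical stub LM: answers from a lookup table keyed on the last "question:" line.
ANSWERS = {"2+2": "4", "3+3": "6", "5+5": "10"}


def fake_lm(prompt: str) -> str:
    last_line = prompt.strip().splitlines()[-1]
    return ANSWERS.get(last_line.removeprefix("question: "), "?")


def exact_match(example: Example, prediction: Example) -> bool:
    return prediction["answer"] == example["answer"]


qa = PredictModule(Signature("Answer the arithmetic question.", ["question"], ["answer"]), fake_lm)
trainset = [{"question": "2+2", "answer": "4"},
            {"question": "7+7", "answer": "14"},
            {"question": "3+3", "answer": "6"}]
compiled = bootstrap_demos(qa, trainset, exact_match)
print(compiled({"question": "5+5"}))
```

The metric is what makes the process measurable: swapping in a different metric changes which demonstrations are kept, without anyone editing the prompt text by hand.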

Who This Is For

This workflow delivers the most value for:

- Content teams needing consistent tone and quality across AI-generated materials

- Customer support managers aiming to improve chatbot response accuracy

- Data analysts requiring reliable AI-assisted insights from complex queries

- Marketing teams optimizing promotional copy generation

What You'll Need

- OpenAI API access with available credits

- Zapier account (free plan sufficient)

- Clear success metrics for your AI outputs

- Initial prompt examples to optimize

Quick Setup Guide

- Download the JSON template file

- Import into your Zapier account

- Connect your OpenAI API credentials

- Configure your success metrics and test cases

- Run the initial optimization cycle
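The workflow's JSON template format is not documented here, so the layout below is a hypothetical example of how success metrics and test cases might be expressed. The Python sketch parses such a config, checks that the metric weights are non-negative and sum to 1 and that every test case has `input` and `expected` fields, and combines per-metric scores into one weighted number that an optimization loop could maximize. The field names and weights are assumptions.

```python
import json

CONFIG_JSON = """
{
  "metric_weights": {"accuracy": 0.6, "relevance": 0.3, "token_efficiency": 0.1},
  "test_cases": [
    {"input": "Summarize: The team meeting moved from Thursday to Friday.",
     "expected": "The meeting is now on Friday."}
  ]
}
"""


def load_config(raw: str) -> dict:
    config = json.loads(raw)
    weights = config["metric_weights"]
    # Non-negative weights summing to 1 keep composite scores in [0, 1].
    if any(w < 0 for w in weights.values()) or abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("metric_weights must be non-negative and sum to 1")
    for i, case in enumerate(config["test_cases"]):
        missing = {"input", "expected"} - set(case)
        if missing:
            raise ValueError(f"test case {i} is missing fields: {sorted(missing)}")
    return config


def composite_score(metrics: dict[str, float], weights: dict[str, float]) -> float:
    # Weighted sum of per-metric scores (each assumed to lie in [0, 1]).
    return sum(weight * metrics[name] for name, weight in weights.items())


config = load_config(CONFIG_JSON)
print(composite_score({"accuracy": 1.0, "relevance": 0.5, "token_efficiency": 0.0},
                      config["metric_weights"]))
```

Validating the config before the first run catches typos in metric names or weights early, before any API credits are spent.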

Key Benefits

30-50% improvement in output quality compared to manual prompt engineering, measured by your specific success metrics.

75% reduction in prompt engineering time by automating the trial-and-error process that typically consumes hours per week.

Consistent AI performance across different operators and use cases through a standardized optimization methodology.

Cost-effective API usage, since optimized prompts can need fewer tokens and fewer follow-up requests to reach the desired results.