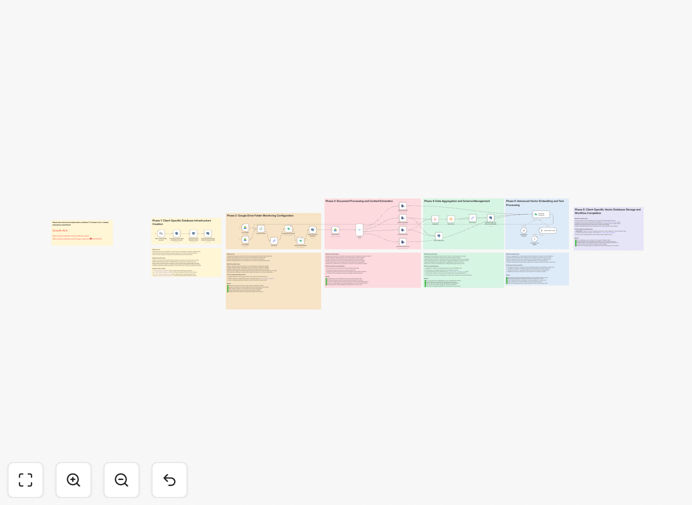

What This Workflow Does

This n8n workflow template implements a complete Agentic Retrieval-Augmented Generation (RAG) pipeline for processing documents across multiple clients. It solves the challenge of efficiently extracting, organizing, and retrieving knowledge from unstructured documents while maintaining client-specific data separation.

The system automatically processes uploaded documents, converts them to embeddings stored in Supabase Vector DB, and enables intelligent query responses using AI-powered retrieval. This eliminates manual document processing while improving knowledge accessibility across your organization.

How It Works

1. Document Ingestion

The workflow begins by accepting document uploads from various sources (email attachments, cloud storage, or direct uploads). Each document is automatically tagged with client identifiers for proper data segregation.

2. Text Extraction & Processing

Documents are parsed to extract text content, which is then cleaned and chunked into manageable segments. This preprocessing ensures optimal embedding generation and retrieval performance.

3. Vector Embedding Generation

The workflow uses AI models to convert document chunks into vector embeddings. These numerical representations capture semantic meaning and enable similarity-based retrieval.

4. Client-Specific Storage

Embeddings are stored in Supabase Vector DB with proper client isolation. The system maintains metadata linking vectors to original documents and client contexts.

5. Query Processing

When users submit queries, the workflow retrieves relevant document chunks based on vector similarity, then generates contextual responses using LLMs. All responses are grounded in the stored documents.

Who This Is For

This template is ideal for:

- Legal firms processing client case documents

- Consulting agencies managing multiple client projects

- Research teams organizing domain-specific knowledge

- Any business handling confidential client documents

What You'll Need

- An n8n instance (self-hosted or cloud)

- Supabase account with Vector DB enabled

- OpenAI API key or compatible LLM provider

- Document storage solution (S3, Google Drive, etc.)

Quick Setup Guide

- Download and import the JSON template into your n8n instance

- Configure Supabase credentials in the Vector DB nodes

- Set up your LLM provider credentials

- Connect your document storage system

- Test with sample documents and queries

Key Benefits

Reduce document processing time by 80%: Automatically extract and organize knowledge from documents without manual review.

Improve response accuracy: AI-generated answers are always grounded in your actual documents, reducing hallucinations.

Maintain client confidentiality: Built-in client isolation ensures data never crosses between accounts.

Scale knowledge management: Handle thousands of documents across multiple clients with consistent performance.