What This Workflow Does

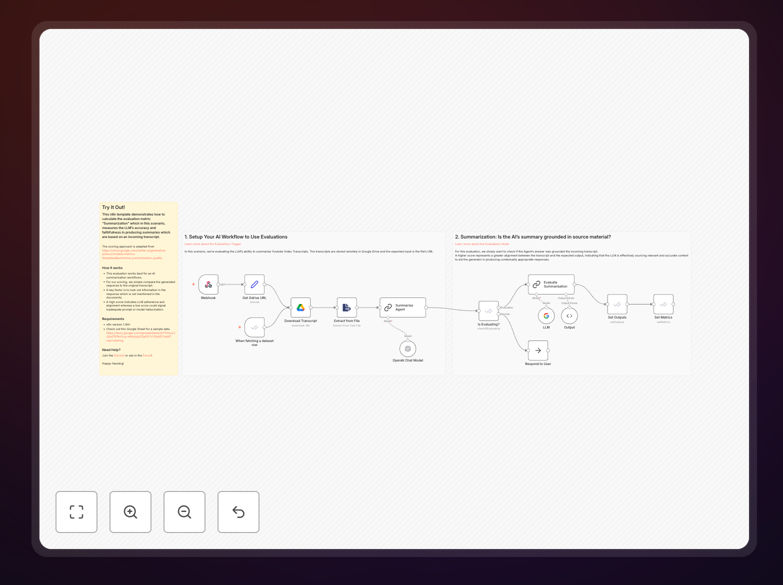

This n8n automation template solves a critical problem for businesses using AI to summarize content: how do you know if the AI is accurate? When you use AI to summarize meeting transcripts, YouTube videos, research papers, or customer conversations, you need confidence that the summary faithfully represents the original content without hallucinations or omissions.

The workflow automatically evaluates AI-generated summaries by comparing them against source transcripts. It calculates a quality score based on factual accuracy, completeness, and faithfulness to the original material. This gives you quantitative metrics to assess your AI's performance, identify when prompts need improvement, and ensure reliable automated summarization at scale.

Businesses using this template can automate quality control for AI content production, reducing manual review time by 60-80% while preventing costly errors from incorrect summaries. It's particularly valuable for content agencies, research teams, customer support departments, and any organization processing large volumes of information through AI.

How It Works

The evaluation follows an adapted version of Google's Vertex AI summarization quality metrics, providing standardized scoring that aligns with industry best practices.

Step 1: Input Processing

The workflow accepts source transcripts (from YouTube, meetings, documents) and AI-generated summaries. It can process multiple files simultaneously, making it scalable for batch operations.
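As a rough illustration of the batch input step, the sketch below pairs each summary with its transcript by a shared key. It is written as plain Python rather than as n8n node configuration, and the function name `pair_inputs` and the idea of keying by a file or video ID are assumptions, not something the template specifies.

```python
def pair_inputs(transcripts: dict[str, str], summaries: dict[str, str]):
    """Match each summary to its transcript by a shared key (hypothetical: a file or video ID).

    Returns (pairs, unmatched): pairs is a list of (key, transcript, summary),
    unmatched lists keys that appear on only one side.
    """
    shared = sorted(transcripts.keys() & summaries.keys())
    pairs = [(key, transcripts[key], summaries[key]) for key in shared]
    unmatched = sorted(transcripts.keys() ^ summaries.keys())
    return pairs, unmatched


pairs, unmatched = pair_inputs(
    {"vid-1": "Full transcript one", "vid-2": "Full transcript two"},
    {"vid-1": "Summary one", "vid-3": "Summary three"},
)
```

Reporting unmatched keys separately lets a batch run continue while still surfacing inputs that cannot be evaluated.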

Step 2: Comparative Analysis

The system compares each summary against its corresponding transcript, checking for matching key information, factual consistency, and potential hallucinations, where the AI invents content not present in the source.
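One common way to run this kind of comparison is to ask a second LLM to act as a judge. The sketch below only assembles the prompt text for one transcript/summary pair. The rubric wording, the 1-5 rating, and the JSON reply format are illustrative assumptions, and the template's actual prompt may differ.

```python
# Hypothetical rubric wording; not taken from the template itself.
RUBRIC = (
    "You are evaluating a summary against its source transcript.\n"
    "Check three things:\n"
    "1. Factual consistency: every claim in the summary is supported by the transcript.\n"
    "2. Completeness: the key points of the transcript appear in the summary.\n"
    "3. Hallucination: the summary adds nothing that is absent from the transcript.\n"
    'Reply with JSON: {"rating": <integer 1-5>, "explanation": "<short reason>"}\n'
)


def build_judge_prompt(transcript: str, summary: str) -> str:
    """Assemble the text sent to the judging LLM for one transcript/summary pair."""
    return (
        f"{RUBRIC}\n"
        f"## Transcript\n{transcript.strip()}\n\n"
        f"## Summary\n{summary.strip()}\n"
    )


prompt = build_judge_prompt("  Alice approved the budget. ", "Budget approved.")
```

Keeping the rubric in one constant makes it easy to revise the evaluation criteria without touching the code that calls the model.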

Step 3: Scoring Calculation

Based on the comparison, the workflow calculates a quality score from 0 to 100. A high score indicates that the summary adheres closely to the source material, while a low score points to an inadequate prompt or model hallucination.
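To make the scoring step concrete, here is a minimal sketch that combines per-dimension results into a single 0-100 score. It assumes the comparison yields one value between 0 and 1 for each of factual accuracy, completeness, and faithfulness. The weights and the name `quality_score` are hypothetical, and the template may compute its score differently.

```python
# Hypothetical weights for the three dimensions; adjust to your own priorities.
WEIGHTS = {"factual_accuracy": 0.4, "completeness": 0.3, "faithfulness": 0.3}


def quality_score(sub_scores: dict[str, float]) -> float:
    """Combine per-dimension scores in [0, 1] into one 0-100 quality score."""
    missing = WEIGHTS.keys() - sub_scores.keys()
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0.0 <= sub_scores[name] <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    weighted = sum(WEIGHTS[name] * sub_scores[name] for name in WEIGHTS)
    return round(weighted * 100, 1)


score = quality_score(
    {"factual_accuracy": 0.5, "completeness": 1.0, "faithfulness": 0.0}
)
```

A weighted average is only one option; a stricter variant could take the minimum of the three dimensions so that a single hallucination drags the whole score down.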

Step 4: Results Delivery

Evaluation results are delivered to your preferred destination: Google Sheets for analysis, a database for tracking, or a communication channel such as Slack or email for immediate alerts about low-quality summaries.
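The routing logic described above can be sketched as follows. The `Evaluation` record, the destination labels, and the alert threshold of 70 are all assumptions made for illustration. In n8n itself this decision would more likely be expressed with a conditional node than with code.

```python
from dataclasses import dataclass

ALERT_THRESHOLD = 70  # hypothetical cut-off for "low quality"


@dataclass
class Evaluation:
    source_id: str
    score: float
    explanation: str


def route(evaluation: Evaluation, threshold: float = ALERT_THRESHOLD) -> list[str]:
    """Return the destinations one evaluation result should be sent to."""
    destinations = ["sheet"]  # every result is logged for later analysis
    if evaluation.score < threshold:
        destinations.append("alert")  # low scores also trigger a notification
    return destinations


low = route(Evaluation("meeting-07", 42.0, "Summary invents a deadline."))
high = route(Evaluation("meeting-08", 91.0, "Matches the transcript."))
```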

Who This Is For

This template is ideal for content creators, research analysts, customer experience teams, and business intelligence professionals who rely on AI summarization. Marketing agencies using AI to summarize campaign performance data, legal firms summarizing case documents, educational institutions processing lecture content, and product teams analyzing user feedback will find immediate value.

Teams that need to scale their AI content production while maintaining quality standards benefit most. If you're currently manually checking AI outputs or worrying about whether your automated summaries are accurate, this workflow provides the systematic quality assurance you need.

What You'll Need

- n8n instance (version 1.94 or higher) - either self-hosted or n8n Cloud

- AI model access - OpenAI, Google Gemini, or another LLM provider for generating summaries

- Source content - YouTube transcripts, meeting transcripts, documents, or other text to summarize

- Destination for results - Google Sheets, database, or communication tool to receive evaluations

- Sample data - Initial transcripts and summaries to test the evaluation logic

Pro tip: Start with a small batch of manually reviewed summaries to establish your quality baseline. Use these to calibrate the evaluation thresholds in the workflow before scaling to full automation.
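One simple way to do this calibration is to pick the threshold that agrees most often with your manual verdicts. The sketch below assumes each reviewed sample is a pair of (score, human verdict), where the verdict is `True` for an acceptable summary. The function name and the sample values are illustrative only.

```python
def best_threshold(samples: list[tuple[float, bool]]) -> int:
    """Pick the integer threshold (0-100) that best matches human verdicts.

    A summary "passes" when score >= threshold. When several thresholds
    agree equally well with the reviewers, the lowest one is returned.
    """
    def agreement(threshold: int) -> int:
        # Count samples where the automatic pass/fail matches the human verdict.
        return sum((score >= threshold) == ok for score, ok in samples)

    return max(range(0, 101), key=agreement)


reviewed = [(90, True), (80, True), (60, False), (40, False)]
threshold = best_threshold(reviewed)
```

With only a handful of samples the result is coarse, so it is worth re-running the calibration as more manually reviewed summaries accumulate.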

Quick Setup Guide

- Download and import the template JSON file into your n8n instance

- Configure AI connections by adding your API keys for OpenAI, Google Gemini, or your preferred LLM

- Set up input sources - connect YouTube, transcription services, or document repositories

- Configure output destinations - connect Google Sheets, databases, or communication tools

- Test with sample data - use the provided Google Sheet template with example transcripts

- Adjust scoring thresholds based on your quality requirements and initial test results

- Activate the workflow and begin processing your actual content

Key Benefits

Reduce manual review time by 60-80% while improving consistency. Automated evaluation provides standardized scoring that doesn't suffer from human fatigue or inconsistency across team members.

Prevent costly errors from AI hallucinations by catching inaccurate summaries before they reach decision-makers. This is especially critical for legal, medical, and financial applications where incorrect information has serious consequences.

Scale AI content production confidently with systematic quality control. As you increase the volume of automated summarization, the evaluation workflow ensures quality doesn't degrade with quantity.

Create feedback loops to improve AI performance by identifying patterns in low-scoring summaries. Use these insights to refine your prompts, choose better models, or adjust your source material preparation.
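As a small example of such a feedback loop, the sketch below groups evaluation results by prompt version and reports how often each version falls below a threshold. Tagging results with a prompt version, the threshold of 70, and the function name are assumptions; any attribute you log alongside the score (model, source type) could be used the same way.

```python
from collections import defaultdict


def low_score_rate_by_prompt(results, threshold: float = 70) -> dict[str, float]:
    """Return, per prompt version, the fraction of scores below the threshold.

    results is an iterable of (prompt_version, score) pairs.
    """
    flags_by_version = defaultdict(list)
    for version, score in results:
        flags_by_version[version].append(score < threshold)
    return {
        version: round(sum(flags) / len(flags), 2)
        for version, flags in flags_by_version.items()
    }


rates = low_score_rate_by_prompt(
    [("v1", 50), ("v1", 90), ("v2", 95), ("v2", 85)]
)
```

A version with a noticeably higher low-score rate is a natural candidate for prompt revision.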

Generate quality metrics for reporting and optimization that demonstrate ROI on AI investments. Track improvement over time and make data-driven decisions about your AI strategy.