What This Workflow Does

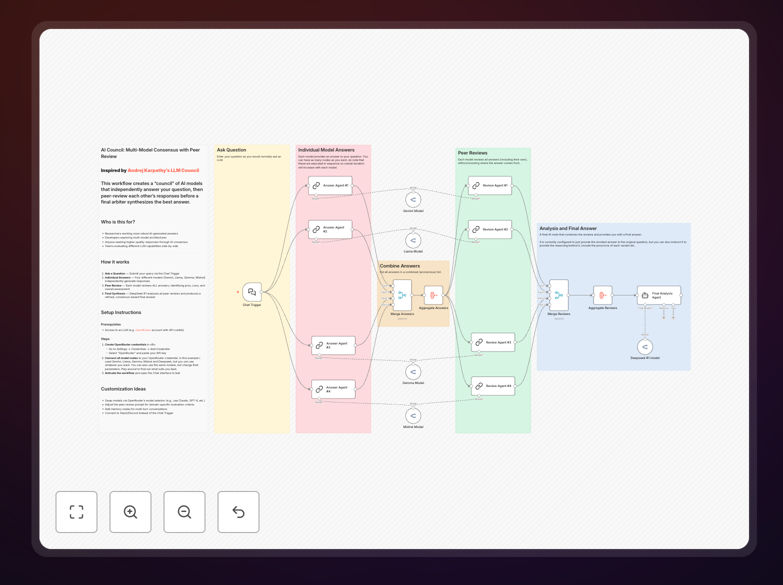

This workflow addresses unreliable or biased AI-generated answers by creating a "council" of AI models: each model answers your question independently, the models then critically evaluate one another's responses, and a final arbiter synthesizes a single answer. Inspired by Andrej Karpathy's LLM Council concept, this n8n implementation makes multi-model consensus accessible without coding.

Traditional AI usage often relies on a single model's perspective, which can include biases, knowledge gaps, or hallucinations. This workflow mitigates those risks by leveraging diversity: different models (like Gemini, Llama, Gemma, and Mistral) bring different strengths, training data, and reasoning approaches. The peer review process then helps filter out weak arguments and surface the most credible insights.

The business value is substantial: you get higher-quality answers for strategic decisions, reduced risk of acting on flawed AI advice, and a more systematic approach to leveraging AI for complex problem-solving. It's particularly valuable for research, competitive analysis, strategic planning, and any scenario where you'd normally consult multiple human experts.

How It Works

1. Question Submission

You submit your query through a simple chat interface or trigger. The workflow accepts any business question, from "What are the emerging trends in our industry?" to "How should we approach this competitive threat?"

2. Parallel Model Responses

Four different AI models independently generate answers to your question. Each model has its own architecture and training, which encourages diverse perspectives. The workflow can be configured to use any combination of available models through services like OpenRouter.
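
For readers who want to see the fan-out pattern outside n8n, here is a minimal Python sketch. It is an illustration, not the template's actual implementation: the model names are placeholders, and `ask` is a hypothetical function you would replace with a real API call. A stub is used here so the example runs offline.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

# Placeholder names (assumption); substitute the model IDs your provider exposes.
COUNCIL: List[str] = ["model-a", "model-b", "model-c", "model-d"]


def collect_answers(
    question: str,
    models: List[str],
    ask: Callable[[str, str], str],
) -> Dict[str, str]:
    """Send the same question to every model concurrently and collect the replies."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        # Submit all requests first so they run in parallel, not one after another.
        futures = {model: pool.submit(ask, model, question) for model in models}
        return {model: future.result() for model, future in futures.items()}


def fake_ask(model: str, question: str) -> str:
    # Stand-in for a real API call (hypothetical helper).
    return f"[{model}] answer to: {question}"


answers = collect_answers(
    "What are the emerging trends in our industry?", COUNCIL, fake_ask
)
```

No model sees another model's answer at this stage, which is what keeps the responses independent.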

3. Cross-Model Peer Review

Each model reviews all other models' answers, identifying strengths, weaknesses, logical flaws, and overall quality. This creates a matrix of evaluations where every response gets multiple critical assessments from different AI "peers."
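
The review matrix can be sketched as one prompt per reviewer, each containing every answer except the reviewer's own. The prompt wording and the "Response 1, Response 2" labels below are assumptions made for illustration; the template's own review prompts may differ.

```python
from typing import Dict


def build_review_prompts(question: str, answers: Dict[str, str]) -> Dict[str, str]:
    """Return one review prompt per reviewer, covering every answer except its own."""
    prompts: Dict[str, str] = {}
    for reviewer in answers:
        peer_answers = [text for model, text in answers.items() if model != reviewer]
        # Number the peer answers instead of naming their authors
        # (an illustrative choice, not something the template is known to do).
        sections = [
            f"Response {i}:\n{text}" for i, text in enumerate(peer_answers, start=1)
        ]
        prompts[reviewer] = (
            f"Question: {question}\n\n"
            + "\n\n".join(sections)
            + "\n\nFor each response, list strengths, weaknesses, and logical flaws, "
            "then rate its overall quality."
        )
    return prompts
```

With four models, this yields four review prompts, and each answer is assessed by its three peers.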

4. Final Synthesis

A final arbiter model (like DeepSeek R1) analyzes all peer reviews and original answers to produce a refined, consensus-based final answer. This synthesis incorporates the best elements from each response while addressing the criticisms raised during peer review.
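
As a rough sketch of what the arbiter receives, the function below assembles the question, the original answers, and the peer reviews into a single prompt. The layout and instruction text are assumptions for illustration, not the template's actual arbiter prompt.

```python
from typing import Dict


def build_synthesis_prompt(
    question: str,
    answers: Dict[str, str],
    reviews: Dict[str, str],
) -> str:
    """Combine the question, all answers, and all reviews into one arbiter prompt."""
    parts = [f"Question: {question}", "", "Original answers:"]
    for model, text in answers.items():
        parts.append(f"- {model}: {text}")
    parts += ["", "Peer reviews:"]
    for reviewer, text in reviews.items():
        parts.append(f"- review by {reviewer}: {text}")
    parts += [
        "",
        "Write one final answer that keeps the best-supported points "
        "and addresses the criticisms raised in the reviews.",
    ]
    return "\n".join(parts)
```

Giving the arbiter both the answers and the reviews lets it weigh each claim against the criticism it received rather than simply averaging the responses.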

5. Delivery

The consensus answer is delivered back to you through your preferred channel, along with optional insights about how different models approached the question and what the key points of agreement and disagreement were.

Who This Is For

This workflow is ideal for businesses and professionals who rely on AI for critical thinking tasks. Researchers can use it to get more robust literature reviews or hypothesis generation. Strategy teams can employ it for market analysis and competitive intelligence. Product managers can leverage it for feature prioritization and user research synthesis.

Consultants and advisors will find it valuable for preparing comprehensive client recommendations. Content creators can use it to develop more nuanced perspectives on complex topics. Essentially, anyone who currently uses AI for more than simple factual queries and wants higher-quality, more reliable outputs will benefit from this consensus approach.

Pro tip: Use this workflow to prepare for important meetings where you need to anticipate different perspectives. It helps identify blind spots in your own thinking by simulating how various "experts" (the AI models) would approach the same problem.

What You'll Need

- n8n instance (self-hosted or cloud)

- OpenRouter account or access to multiple AI model APIs

- API credits for the AI services you plan to use

- Basic understanding of how to configure API credentials in n8n

- Clear questions that benefit from multiple perspectives

Quick Setup Guide

- Download the template using the button above and import it into your n8n instance.

- Create OpenRouter credentials in n8n Settings → Credentials → Add Credential → OpenRouter.

- Connect all AI model nodes to your OpenRouter credential by selecting each node and choosing your credential.

- Configure model selection in each node if you want to use models other than the defaults (Gemini, Llama, Gemma, Mistral, DeepSeek).

- Activate the workflow and test it using the Chat Trigger node interface or connect it to your preferred trigger (Slack, webhook, schedule).

- Customize prompts if needed, especially the peer review criteria to match your specific evaluation standards.
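
If you want to sanity-check your OpenRouter key outside n8n before wiring it into the workflow, a request along these lines should work. This is a minimal sketch using OpenRouter's OpenAI-compatible chat completions endpoint; the API key and model ID shown in the commented-out part are placeholders you must replace, and sending the request consumes API credits.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(api_key: str, model: str, question: str) -> urllib.request.Request:
    """Build (but do not send) a single-question chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": question}]}
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# To actually send it, uncomment and fill in real values (placeholders below):
# request = build_chat_request("YOUR_API_KEY", "your/model-id", "Say hello.")
# with urllib.request.urlopen(request) as response:
#     reply = json.load(response)
#     print(reply["choices"][0]["message"]["content"])
```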

Key Benefits

Higher answer quality through diversity: By consulting multiple AI models with different training and architectures, you get a more comprehensive perspective than any single model can provide. Different models excel at different types of reasoning, and the consensus approach leverages these complementary strengths.

Reduced bias and hallucination risk: The peer review process acts as a quality control mechanism. When one model hallucinates or exhibits strong bias, other models often identify and critique these flaws during peer review, which makes it less likely that they carry over into the final answer.

Cost-effective expertise simulation: This workflow simulates consulting a panel of experts at a fraction of the cost. While using multiple models increases API costs compared to a single query, it's dramatically cheaper than hiring human experts and provides 24/7 availability.
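
To estimate the extra API usage, you can count the calls made per question. The sketch below assumes each reviewer evaluates all of its peers in a single call and that one arbiter call follows; if your configuration splits reviews into separate calls, the total will be higher.

```python
def council_call_count(n_models: int) -> int:
    """Calls per question: n answers + n review calls + 1 arbiter call."""
    return n_models + n_models + 1


def reviews_per_answer(n_models: int) -> int:
    """Each answer is reviewed by every model except its author."""
    return n_models - 1


calls = council_call_count(4)        # 4 answers + 4 reviews + 1 synthesis
peer_reviews = reviews_per_answer(4)
```

For the default four-model council this comes to nine calls per question. Token usage grows faster than the call count, because every review prompt contains three answers and the arbiter prompt contains all answers and reviews.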

Configurable for specific needs: You can easily swap models, adjust the number of participants, modify peer review criteria, and connect the workflow to different triggers and outputs. This flexibility lets you tailor the system to your exact business requirements.

Transparent reasoning process: Unlike a black-box single answer, this workflow lets you see how different models approached the problem and what criticisms emerged during peer review. This transparency builds confidence in the final consensus and provides valuable insights about the question itself.