What This Workflow Does

This automation solves the critical problem of delayed awareness when website or application deployments fail. In modern development environments where deployments happen multiple times per day, teams can't afford to manually monitor every deployment status. This workflow automatically detects failed deployments and sends detailed alerts to your Slack channel.

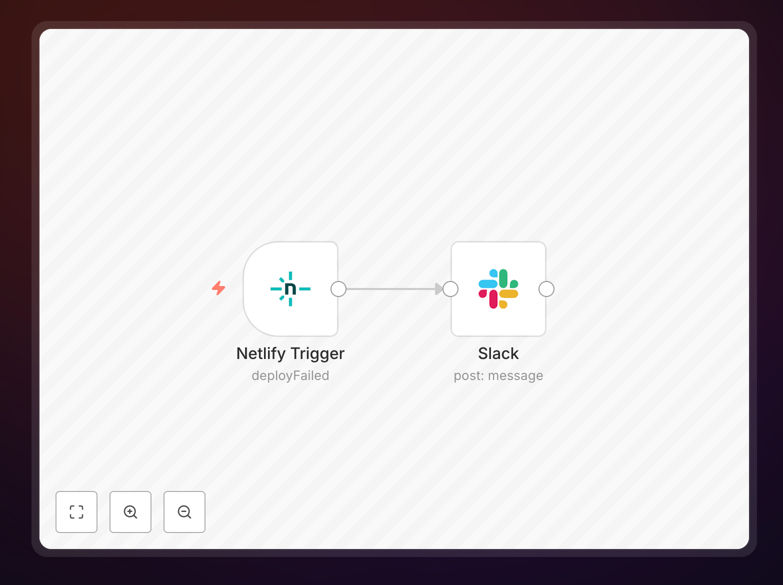

The solution integrates with popular deployment platforms like Netlify, Vercel, or AWS CodeDeploy to monitor deployment status. When a failure occurs, it immediately notifies the right team members with all necessary context—including error messages, deployment environment, and links to logs—accelerating incident response.

How It Works

1. Deployment Monitoring

The workflow starts by receiving webhook notifications from your deployment platform whenever a deployment completes (successfully or not). This real-time trigger ensures immediate awareness of deployment status changes without polling or manual checks.
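As a rough illustration of this step, the sketch below parses an incoming webhook body into a small normalized event. The field names `id`, `status`, and `environment`, and the `DeploymentEvent` shape, are assumptions made for the example; each deployment platform uses its own payload format, so check its webhook documentation before relying on any field.

```typescript
// Hypothetical normalized shape; real payloads differ per platform.
interface DeploymentEvent {
  deploymentId: string;
  status: string; // lower-cased so later comparisons are case-insensitive
  environment: string;
  receivedAt: string; // ISO 8601 timestamp recorded when the webhook arrives
}

// Parse a raw webhook body and keep only the fields later steps rely on.
// The input field names (`id`, `status`, `environment`) are assumptions.
function parseDeploymentWebhook(rawBody: string, now: Date = new Date()): DeploymentEvent {
  const body: unknown = JSON.parse(rawBody);
  if (typeof body !== "object" || body === null) {
    throw new Error("Webhook body must be a JSON object");
  }
  const { id, status, environment } = body as Record<string, unknown>;
  if (typeof id !== "string" || typeof status !== "string") {
    throw new Error("Webhook body is missing `id` or `status`");
  }
  return {
    deploymentId: id,
    status: status.toLowerCase(),
    environment: typeof environment === "string" ? environment : "unknown",
    receivedAt: now.toISOString(),
  };
}
```

Rejecting malformed bodies early keeps the later detection and formatting steps from working with partial data.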

2. Failure Detection

The workflow analyzes the deployment status payload to determine if the deployment failed. It checks for specific error codes or failure states that different platforms might use, ensuring reliable detection across various CI/CD tools.
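One way to sketch this check is a small predicate over the payload. The `StatusPayload` fields and the values in `FAILURE_STATES` below are illustrative assumptions, not the exact strings any particular platform sends; confirm the real values in each platform's documentation.

```typescript
// Illustrative failure states; the real strings vary by platform.
const FAILURE_STATES: ReadonlySet<string> = new Set(["error", "failed", "failure"]);

// Hypothetical payload fields; some platforms report `status`, others `state`.
interface StatusPayload {
  status?: string;
  state?: string;
  errorCode?: string;
}

// Returns true when the payload reports a known failure state,
// or carries a non-empty error code even if the state is unrecognized.
function isFailedDeployment(payload: StatusPayload): boolean {
  const state = (payload.status ?? payload.state ?? "").trim().toLowerCase();
  if (FAILURE_STATES.has(state)) {
    return true;
  }
  return typeof payload.errorCode === "string" && payload.errorCode.length > 0;
}
```

Normalizing case and accepting either field name is what lets a single check cover several platforms, at the cost of maintaining the list of failure states by hand.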

3. Alert Generation

When a failure is detected, the workflow compiles the relevant information, including the deployment ID, timestamp, environment, error messages, and any available logs. It then builds a clear, actionable Slack message that emphasizes the critical details.
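The message-building step might look like the following sketch. The `FailureDetails` shape and the wording of the alert are assumptions for illustration; the output uses Slack's `mrkdwn` text inside a single Block Kit section, with a plain `text` field as the notification fallback.

```typescript
// Hypothetical input shape gathered from the deployment payload.
interface FailureDetails {
  deploymentId: string;
  environment: string;
  timestamp: string;
  errorMessage: string;
  logsUrl?: string;
}

// Minimal subset of a Slack message: fallback text plus one section block.
interface SlackMessage {
  text: string;
  blocks: Array<{ type: "section"; text: { type: "mrkdwn"; text: string } }>;
}

// Build a Slack message that puts the failure and its context up front.
function formatFailureAlert(details: FailureDetails): SlackMessage {
  const lines = [
    `*Deployment failed* in \`${details.environment}\``,
    `*Deployment ID:* ${details.deploymentId}`,
    `*Time:* ${details.timestamp}`,
    `*Error:* ${details.errorMessage}`,
  ];
  if (details.logsUrl) {
    // Slack's link syntax is <url|label>.
    lines.push(`<${details.logsUrl}|View logs>`);
  }
  return {
    text: `Deployment ${details.deploymentId} failed in ${details.environment}`,
    blocks: [{ type: "section", text: { type: "mrkdwn", text: lines.join("\n") } }],
  };
}
```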

4. Notification Delivery

The final step sends the formatted alert to your designated Slack channel, tagging relevant team members or channels based on the severity or environment of the failed deployment. The message includes quick action buttons where supported by Slack.
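A minimal sketch of environment-based routing follows. The routing table, the placeholder webhook URLs, and the choice of mentions are all hypothetical; substitute the incoming webhook URLs and mentions your own workspace uses. The function only builds the request so it can be inspected before anything is sent.

```typescript
// Hypothetical route: where to post and whom to mention.
interface AlertRoute {
  webhookUrl: string;
  mention: string; // Slack mention syntax, e.g. <!channel> or <!here>
}

// Placeholder URLs; real incoming webhook URLs come from your Slack app settings.
const ROUTES = new Map<string, AlertRoute>([
  ["production", { webhookUrl: "https://hooks.slack.com/services/PLACEHOLDER/PROD", mention: "<!channel>" }],
  ["staging", { webhookUrl: "https://hooks.slack.com/services/PLACEHOLDER/STAGING", mention: "<!here>" }],
]);
const DEFAULT_ROUTE: AlertRoute = {
  webhookUrl: "https://hooks.slack.com/services/PLACEHOLDER/DEFAULT",
  mention: "",
};

interface SlackRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

// Pick a route for the environment and build the HTTP request for it.
function buildSlackRequest(environment: string, text: string): SlackRequest {
  const route = ROUTES.get(environment) ?? DEFAULT_ROUTE;
  const fullText = route.mention ? `${route.mention} ${text}` : text;
  return {
    url: route.webhookUrl,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ text: fullText }),
    },
  };
}
```

The result can be passed straight to `fetch(request.url, request.init)`. Keeping the routing separate from the network call makes it easy to verify which channel and mention a given environment resolves to.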

Pro tip: Configure your deployment platform to include commit messages and author information in webhook payloads—this helps your team quickly identify who might best address the failure.

Who This Is For

This workflow is essential for development teams practicing continuous deployment, DevOps engineers managing production environments, and technical leads responsible for system reliability. It's particularly valuable for:

- Teams deploying to production multiple times per day

- Organizations with microservices architectures where deployments happen frequently

- Companies with strict SLA requirements for uptime and incident response

- Distributed teams needing centralized visibility into deployment status

What You'll Need

- An n8n instance (cloud or self-hosted)

- A Slack workspace where you have permission to create incoming webhooks

- A deployment platform that supports webhook notifications (Netlify, Vercel, AWS CodeDeploy, etc.)

- The incoming webhook URL for the Slack channel that will receive alerts

Quick Setup Guide

1. Download the workflow template file

2. Import it into your n8n instance

3. Configure the webhook trigger with your deployment platform

4. Set up your Slack webhook connection

5. Test with a failed deployment to verify notifications

6. Adjust message formatting and routing as needed

Key Benefits

Reduce downtime by catching failures immediately - Because the alert fires as soon as the platform reports a failure, detection time can drop from hours to seconds, minimizing user impact.

Standardize alert formatting across your team - Everyone receives consistent, well-structured notifications with all necessary context, eliminating confusion.

Integrate with existing workflows - Since alerts come through Slack where your team already works, there's no new tool to monitor or learn.

Scale with your deployment frequency - The automated workflow handles any number of deployments without additional monitoring overhead.